I've been playing once again with a Coursera class on machine learning.

The most recent lesson was about clustering and dimension reduction in data sets.

Dimensionality

When we look at the world, we categorize items (people, books, music, etc.) based on their individual characteristics. When certain items are extremely complex, we automatically reduce their characteristics to a smaller, more manageable set to aid in our categorization.

When it comes to categorizing people, this can be a dangerous step to take - no one wants to be reduced to a set of characteristics more general than their real, complex character. For items like books, TV shows, computer hardware, and blog posts, though, it's hugely helpful.

Take a look at just about any blog/news site on the web and you'll see a finite set of taxonomical terms used to describe each entry. It's a simple way to reduce a body of content that could span thousands of individual entries into discrete, (hopefully) related buckets.

Clustering

The problem with explicit categorization is that it could be misleading. You're interested in one or two articles I write on PHP unit testing, but that doesn't mean you care at all about dynamic data encryption - on this site, articles about each would fall into the "Technology" category. It's my attempt to help you isolate content you actually want to read from all the work I want to write, but it's a clumsy method.

We use tools like Zemanta and Jetpack to attempt to scan and identify related content (for suggesting other articles that might interest you), but the magic of their algorithms remains hidden in the black box of their third-party systems. I'd love the opportunity to use a clustering algorithm of my own to categorize and identify (potentially) related content.

Clustering is the practice of identifying "groups" of elements based on a defined set of features. Every word and sentence in an article could be a "feature" in this situation, and dimension reduction can help us optimize the learning algorithm to run quickly on what could be a very large set of data.
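To make the idea of words as "features" a bit more concrete, here is a minimal sketch in Python. It is only an illustration under my own assumptions: the helper names (`tokenize`, `build_vocabulary`, `vectorize`) and the sample post titles are hypothetical, and trimming the vocabulary to the most common words is just one crude way to reduce dimensions, not the technique taught in the course.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple alphabetic words."""
    return re.findall(r"[a-z']+", text.lower())


def build_vocabulary(documents, max_features):
    """Keep only the max_features most common words across all documents.

    Trimming the vocabulary is a crude form of dimension reduction:
    every dropped word is one less feature per document.
    """
    counts = Counter()
    for doc in documents:
        counts.update(tokenize(doc))
    return [word for word, _ in counts.most_common(max_features)]


def vectorize(text, vocabulary):
    """Represent one document as word counts over the fixed vocabulary."""
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocabulary]


# Hypothetical post titles, used purely as sample data.
posts = [
    "Unit testing PHP code with PHPUnit",
    "Testing PHP applications",
    "Encryption of dynamic data",
]
vocabulary = build_vocabulary(posts, max_features=4)
vectors = [vectorize(post, vocabulary) for post in posts]
```

Each post ends up as a list of four numbers instead of one number per distinct word in the corpus, which is the kind of smaller input a clustering algorithm can chew through quickly.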

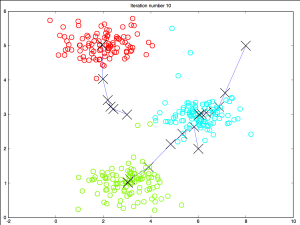

[caption id="attachment_6531" align="alignright" width="300"] This chart represents the iterative process of K-means run through 10 iterations on a sample data set.[/caption]

K-means clustering, for example, starts with a randomly initialized set of "group" centers. The algorithm then assigns every point in the data set to the group whose center it is closest to. Then it redefines the center of each group as the mean of that group's members. The routine repeats these two steps until it converges on a stable, (locally) optimized set of data groups.
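The steps above can be sketched in a few lines of plain Python. This is a simplified illustration rather than a production implementation: the function names, the fixed iteration count, and the choice to seed the centers from randomly sampled data points are my own assumptions.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return [sum(dim) / n for dim in zip(*points)]


def kmeans(points, k, iterations=10, seed=0):
    """Run a fixed number of K-means iterations.

    Returns (centroids, assignments), where assignments[i] is the index
    of the centroid closest to points[i].
    """
    rng = random.Random(seed)
    # Assumption: initialize the centers from k randomly chosen data points.
    centroids = [list(p) for p in rng.sample(points, k)]
    assignments = []
    for _ in range(iterations):
        # Assignment step: each point joins the group with the nearest center.
        assignments = [
            min(range(k), key=lambda i: squared_distance(p, centroids[i]))
            for p in points
        ]
        # Update step: move each center to the mean of its members.
        for i in range(k):
            members = [p for p, a in zip(points, assignments) if a == i]
            if members:  # leave a center in place if its group is empty
                centroids[i] = mean(members)
    return centroids, assignments


# Two obvious blobs of sample points, made up for this example.
points = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
centroids, assignments = kmeans(points, k=2)
```

On data this cleanly separated, the three points near the origin land in one group and the three points near (10, 10) in the other. Real data is messier, and the result can depend on the random starting centers.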

Ignoring a visual plot of data (I strongly hope my writing has more than two definable dimensions against which to categorize), it would be fairly straightforward to use a tool like K-means clustering to identify trends and "groups" on a site. The only downside is that these groups wouldn't necessarily line up with human-identifiable classifiers - that's to say the final groupings wouldn't be discrete, label-worthy categories.

The process would allow us to reliably identify groups of related content, though, potentially improving upon (or even outright replacing) third-party hosted "related post" plugins and tools.
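Once every post has a group, suggesting related content is mostly bookkeeping. The sketch below assumes a list of post titles and a matching list of group ids; both are hypothetical sample data, and `related_posts` is an illustrative helper, not part of any plugin.

```python
from collections import defaultdict


def related_posts(titles, assignments):
    """Map each title to the other titles that share its group."""
    clusters = defaultdict(list)
    for title, cluster_id in zip(titles, assignments):
        clusters[cluster_id].append(title)
    return {
        title: [other for other in clusters[cluster_id] if other != title]
        for title, cluster_id in zip(titles, assignments)
    }


# Hypothetical titles and group ids, standing in for real clustering output.
titles = [
    "PHP unit testing basics",
    "Mocking in PHPUnit",
    "Dynamic data encryption",
]
assignments = [0, 0, 1]
related = related_posts(titles, assignments)
```

A post that sits alone in its group simply gets an empty list, which is a reasonable signal to fall back on something else, such as the most recent posts.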