When I explain to people that I enjoy writing code in C# more than PHP, they scratch their collective heads and, with a quizzical look, always respond, "why?"

My biggest reason: I really enjoy working with parallel processing.

Multitasking

Before I get to computers, let's use humans as a model for understanding what's going on. Humans are really bad at multitasking.[ref]Some people will claim they're actually very good multitaskers. I say they're lying. I have never met anyone who's actually good at multitasking. Ever.[/ref] There are studies that disprove claims of being good at this, so let's accept for now that humans are a good model for something that can only do one thing at a time.

The only problem: humans are also expected to perform multiple tasks in parallel all the time. Take, for example, a developer - call him Bob - who runs a one-man agency.

Bob is doing very well for himself. He has 3 steady clients and is making a high enough margin to keep the bills paid and still have a life outside of his 9-5. Except, Bob wants to expand. He wants to grow his little agency out of the home office - but he's not bringing in enough capital to hire a contractor just yet.

Instead, Bob takes on 2 more clients. Unfortunately, having five clients demand Bob's time leads to chaos. He's still expected to perform the same work for the existing 3 clients, but is now forced to switch contexts from one client to another more frequently just to keep up.

Bob is a single-threaded application. He can only do one thing at a time, so managing 5 different workloads requires constant shuffling of priorities. Deliverables ship more slowly and Bob has trouble keeping up with demand.

Finally, there's enough money in the bank for Bob to hire Cindy. The two of them split the workload fairly evenly: Bob keeps the original 3 clients, Cindy takes the 2 newer ones. Both developers have to switch contexts less frequently, so they're more efficient with their deliverables and start shipping code on time once again.

Computer Applications

Back in the day, computers had a single physical core - the CPU - with which to handle any instructions they were tasked to process. The advent of personal computers brought along with it the demand that a computer do two things at once.

Tasks on a computer are split across threads. Each thread corresponds roughly to a single task, and a traditional CPU core can only process one thread at a time. If you have a newer machine with multiple physical cores, then your machine is capable of processing multiple threads at the same time.

Newer technologies like hyper-threading let a single physical core present itself as more than one virtual core, so a machine can take on additional threads it otherwise couldn't handle. These technologies make our machines faster and, like Bob hiring Cindy, equate to managing many simultaneous tasks more efficiently.

On the Web

Unfortunately for WordPress developers, our jobs consist of working with PHP and JavaScript. Neither language supports threading - that is, both are single-threaded languages that, like humans, suck at multitasking.

In JavaScript, we can fake threading using [cci]setTimeout[/cci] to break out of the current execution cycle and simulate the effect of doing something in the background. Unfortunately, this isn't really a threaded task. The browser (that is, the UI and the JavaScript engine) runs on one thread inside your machine. If you've ever had a script crash a browser because it took too long, you've run into this limitation head-on.
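Here's a minimal sketch of that limitation, written for Node.js (the same ordering holds in a browser). The [cci]busyWork[/cci] helper and the step labels are made up for illustration. Even with a delay of 0, the deferred callback has to wait until the code that is already running has finished.

```javascript
// Track the order in which each step actually runs.
const order = [];

// Stand-in for expensive synchronous work (hypothetical helper).
function busyWork(iterations) {
  let total = 0;
  for (let i = 0; i < iterations; i += 1) {
    total += i;
  }
  return total;
}

order.push('scheduled');

// Ask the engine to run this later. It does not move to another thread;
// it waits in a queue until the currently running code has finished.
setTimeout(() => {
  order.push('deferred callback');
  console.log(order.join(' -> '));
  // prints "scheduled -> busy work done -> deferred callback"
}, 0);

// Even with a 0 ms delay, the callback cannot interrupt this loop.
busyWork(1e6);
order.push('busy work done');
```

If [cci]busyWork[/cci] ran for ten seconds instead, the callback (and, in a browser, the UI) would be stuck for those ten seconds too.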

With PHP, we can attempt to fork the process, usually by spinning up a separate PHP instance and offloading some of our data processing to it. Again, this isn't real threading - you're using a separate process to handle the processing rather than juggling multiple threads within your application.

I enjoy working with C# (and all of the .NET family, actually) because it natively supports threading. It ships with a thread pool, which allows our application to:

- Offload certain tasks to a background thread

- Register a callback function within the application that is invoked when processing is complete

Item #2 is what's lacking in the PHP spin-off-a-separate-process world. We can offload tasks to another process, but we are blind to when that processing is complete.[ref]Ultimately, you could hack something here with shared memory between the processes and just monitor things to see when the processing is finished. This is a sloppy approach to parallel processing.[/ref]

JavaScript will actually allow you to do both - achieving real parallel processing - but this requires use of the new Web Worker API and has a few other caveats as well.[ref]I presented on this topic at jQuery Russia, and hope to keep talking about it at JavaScript conferences to come. The discussion on Web Workers is a bit heavy for this particular post, though.[/ref]
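Without going deep into Web Workers, the two capabilities listed above (offload a task, get a callback when it finishes) can be sketched in a few lines. This is only an illustration and it makes some assumptions: it uses Node.js's [cci]worker_threads[/cci] module rather than the browser Web Worker API, and [cci]offload[/cci] and [cci]sumSource[/cci] are hypothetical names, not part of any library.

```javascript
const { Worker } = require('worker_threads');

// Code the background thread will run: sum the numbers it was handed.
const sumSource = `
  const { parentPort, workerData } = require('worker_threads');
  let total = 0;
  for (const n of workerData) total += n;
  parentPort.postMessage(total);
`;

// 1. Offload the task to a background thread.
// 2. Register a callback that fires when the work is complete.
function offload(source, input, onComplete) {
  const worker = new Worker(source, { eval: true, workerData: input });
  worker.once('message', (result) => onComplete(null, result));
  worker.once('error', (err) => onComplete(err));
}

offload(sumSource, [1, 2, 3, 4], (err, total) => {
  if (err) throw err;
  console.log('sum computed in the background:', total); // 10
});

console.log('main thread keeps going while the worker runs');
```

The second [cci]console.log[/cci] prints first, because the main thread never waits on the worker. The callback is how it finds out the work is done.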

Practical Solutions

As much as I like C# for its native support, I still use PHP in my day job and am reduced to using some of the hacks I list above to work around its lack of any real threading support. The most straightforward approach I've worked with thus far is a message queue.

The main application needs to process a lot of data using a long-running process. It takes this data and segments it down to the smallest units of work that can conceivably be processed in parallel and adds each to a queue.[ref]I use a library called Gearman to power this particular part of the process.[/ref]

A separate pool of worker tasks - each a separate instance of a single application - is listening for when new jobs are added to the queue. When data is present, the next available worker grabs it from the queue and goes to work. When everything's done, I have the worker process write to a log - often just recording execution time and some ID for the job performed - so the parent application can keep track of what's been done and when.

It's not as elegant as [cci]ThreadPool.QueueUserWorkItem()[/cci], but it gets the job done.
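The queue-and-workers flow described above can be sketched roughly as follows. To be clear about the assumptions: this is a single-process simulation in JavaScript, not Gearman's API and not PHP. The "workers" simply take turns pulling jobs instead of running in parallel, and every name here ([cci]splitIntoJobs[/cci], [cci]drainQueue[/cci], the log fields) is hypothetical.

```javascript
// Break a large data set into the smallest units of work and queue them.
function splitIntoJobs(items, unitSize) {
  const jobs = [];
  for (let i = 0; i < items.length; i += unitSize) {
    jobs.push({ id: jobs.length + 1, payload: items.slice(i, i + unitSize) });
  }
  return jobs;
}

// Simulated pool: the next worker in rotation grabs the next job,
// processes it, and writes a log entry with the job ID and elapsed time.
function drainQueue(queue, workerNames, handler) {
  const log = [];
  let turn = 0;
  while (queue.length > 0) {
    const worker = workerNames[turn % workerNames.length];
    const job = queue.shift();
    const started = Date.now();
    const result = handler(job.payload);
    log.push({ jobId: job.id, worker, result, elapsedMs: Date.now() - started });
    turn += 1;
  }
  return log;
}

// Example: sum five numbers in units of two, shared by two workers.
const jobs = splitIntoJobs([1, 2, 3, 4, 5], 2);
const log = drainQueue(jobs, ['worker-a', 'worker-b'], (numbers) =>
  numbers.reduce((sum, n) => sum + n, 0)
);

for (const entry of log) {
  console.log(`job ${entry.jobId} by ${entry.worker}: ${entry.result} (${entry.elapsedMs} ms)`);
}
```

In a real setup the queue lives in a job server and each worker is its own process, but the shape is the same: split, enqueue, grab, process, log.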

Limitations

Once you start working with parallel processing, there are several things you should keep in mind.

First, you're essentially managing several applications at the same time now. Many of these applications are completely isolated from the main thread's workflow and won't automatically stop execution if the main thread closes things down early. If you don't closely manage how worker processes are created, queued, and destroyed, your system will quickly grind to a halt under the burden of rogue processes.
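One way to keep workers from going rogue is to track every process you start and shut them all down when the parent exits. The sketch below assumes Node.js and its [cci]child_process[/cci] module; [cci]startWorker[/cci], [cci]stopAllWorkers[/cci], and [cci]activeWorkers[/cci] are hypothetical names. Note that the exit hook only covers a normal shutdown, not a parent that is killed outright.

```javascript
const { spawn } = require('child_process');

// Every worker we start is tracked here so none can be forgotten.
const activeWorkers = new Set();

function startWorker(script) {
  // Run the given script in a brand-new Node process.
  const child = spawn(process.execPath, ['-e', script], { stdio: 'ignore' });
  activeWorkers.add(child);
  // Forget the worker once it has really exited.
  child.once('exit', () => activeWorkers.delete(child));
  return child;
}

function stopAllWorkers() {
  for (const child of activeWorkers) {
    child.kill(); // sends SIGTERM by default
  }
}

// If the parent shuts down early, take the workers down with it.
process.once('exit', stopAllWorkers);

// A worker that would otherwise run forever.
const rogue = startWorker('setInterval(() => {}, 1000)');
stopAllWorkers();
console.log('kill signal sent:', rogue.killed);
```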

Second, communication between functions is drastically different. In the WordPress world, we often trigger events and pass data using actions, filters, and even global variables. Function calls (actions and filters) in one process, however, will not affect any others. Likewise, every new worker process possesses a completely siloed scope (global variables are meaningless).
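To make the siloed scope concrete, here's a small sketch, assuming Node.js rather than PHP. The parent sets a global, then starts a fresh process and asks what that process sees. [cci]jobCounter[/cci] and [cci]readGlobalInChild[/cci] are hypothetical names.

```javascript
const { spawnSync } = require('child_process');

// Set a global in the parent process, much like a PHP global variable.
globalThis.jobCounter = 42;

// Start a brand-new Node process and report the type it sees for that global.
function readGlobalInChild(name) {
  const script = `process.stdout.write(typeof globalThis[${JSON.stringify(name)}])`;
  const child = spawnSync(process.execPath, ['-e', script], { encoding: 'utf8' });
  return child.stdout;
}

console.log('parent sees:', typeof globalThis.jobCounter); // number
console.log('child sees:', readGlobalInChild('jobCounter')); // undefined
```

Anything the worker needs has to be handed over explicitly, for example through the job payload on the queue.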

Finally,[ref]I say finally because these are the three biggest "gotchas" for developers new to using parallelism in their workflow. There are several other, more nuanced obstacles to cleanly implementing code in parallel that would take another hundred posts to cover.[/ref] the law of diminishing returns comes into play quite quickly depending on your system architecture. Said another way, just queueing up a hundred new worker threads will not make your application run a hundred times more quickly.

Think back to Bob and Cindy. Both of them are, essentially, separate worker threads in an application (their agency) managing some long-running data processing (client work). They have a fairly small office that suits the two of them quite nicely. Assume, however, that Bob gets really excited about a new contract and goes to hire another 20 developers. They all start at the same time and now there are 22 developers sharing the same workspace.

Elbows bump. Papers are lost. Client work grinds to a halt as the new developers fight over workspace.

Your threads - your worker processes - will behave the same way. Spin up more workers than your machine has cores available to run them and you begin to lose the benefit of partitioning work out among workers in the first place. It's not a hard-and-fast rule, though. You will need to tweak your application and its use of background processes to make the best use of the resources you have available.
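As a starting point before any tuning, you might cap the pool at the number of cores the machine reports. This is a sketch under that assumption, not a rule. [cci]pickWorkerCount[/cci] is a hypothetical helper, and [cci]os.cpus()[/cci] counts logical cores, which can be higher than the physical count on hyper-threaded machines.

```javascript
const os = require('os');

// Never start more workers than there are cores, and never fewer than one.
function pickWorkerCount(requested, coreCount = os.cpus().length) {
  const cores = Math.max(1, coreCount);
  return Math.max(1, Math.min(requested, cores));
}

console.log(pickWorkerCount(100, 4)); // 4
console.log(pickWorkerCount(2, 4)); // 2
console.log('on this machine:', pickWorkerCount(100));
```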

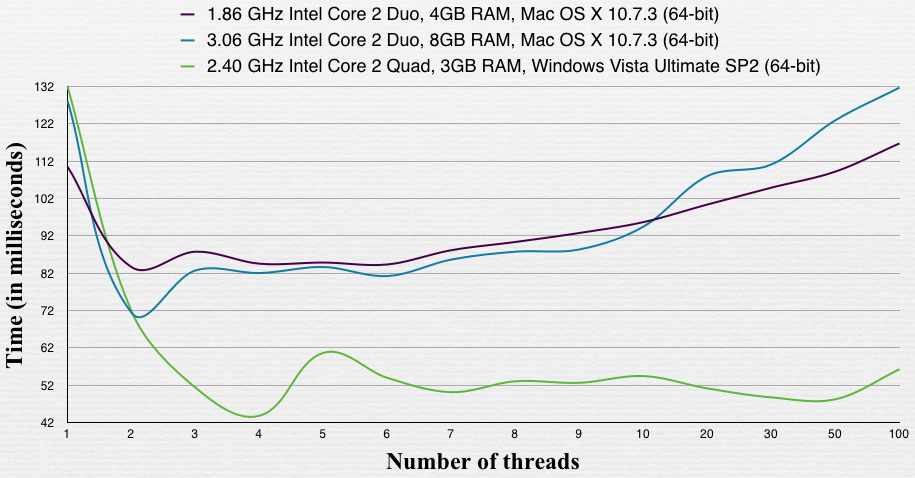

As an example, consider the chart below (taken from a great discussion about parallel processing on Stack Overflow):

This compares two dual-core processors against a quad-core processor, using a variable number of threads to process data in a single, large array (the time on the left is total time for the entire operation). Note what happens as the number of threads (read: background processes) increases above the number of physical cores available. If you're using background processing on your system, you will definitely want to run a similar profile to be sure your application is properly tuned.
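A profile like that boils down to timing the same job at several worker counts and comparing. Here's a rough harness, assuming Node.js. [cci]profileWorkerCounts[/cci], [cci]fastest[/cci], and [cci]sumInSlices[/cci] are hypothetical, and the stand-in workload only slices an array on one thread, so its timings won't show the curve from the chart. Swap in whatever really runs your workers.

```javascript
// Time one run per worker count. `runWithWorkers` is a hypothetical,
// synchronous function that does the real work with that many workers.
function profileWorkerCounts(workerCounts, runWithWorkers) {
  return workerCounts.map((workers) => {
    const started = process.hrtime.bigint();
    runWithWorkers(workers);
    const elapsedMs = Number(process.hrtime.bigint() - started) / 1e6;
    return { workers, elapsedMs };
  });
}

// Pick the run with the lowest total time.
function fastest(results) {
  return results.reduce((best, r) => (r.elapsedMs < best.elapsedMs ? r : best));
}

// Stand-in workload: sums an array slice by slice, with no real parallelism.
function sumInSlices(workers) {
  const data = Array.from({ length: 100000 }, (_, i) => i);
  const sliceSize = Math.ceil(data.length / workers);
  let total = 0;
  for (let start = 0; start < data.length; start += sliceSize) {
    for (const n of data.slice(start, start + sliceSize)) total += n;
  }
  return total;
}

const results = profileWorkerCounts([1, 2, 4, 8], sumInSlices);
for (const r of results) {
  console.log(`${r.workers} workers: ${r.elapsedMs.toFixed(2)} ms`);
}
console.log('fastest run used', fastest(results).workers, 'workers');
```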

So why do I like C#? Because it makes all of the above easy. Why do I stick with PHP and JS? Because I can still accomplish the same tasks - it just takes a bit more forethought before I kick off the project.

Do you use parallel processing in any of your projects? Have you considered all of the above? What other advice would you add for developers trying to wrap their heads around it?